Abstract

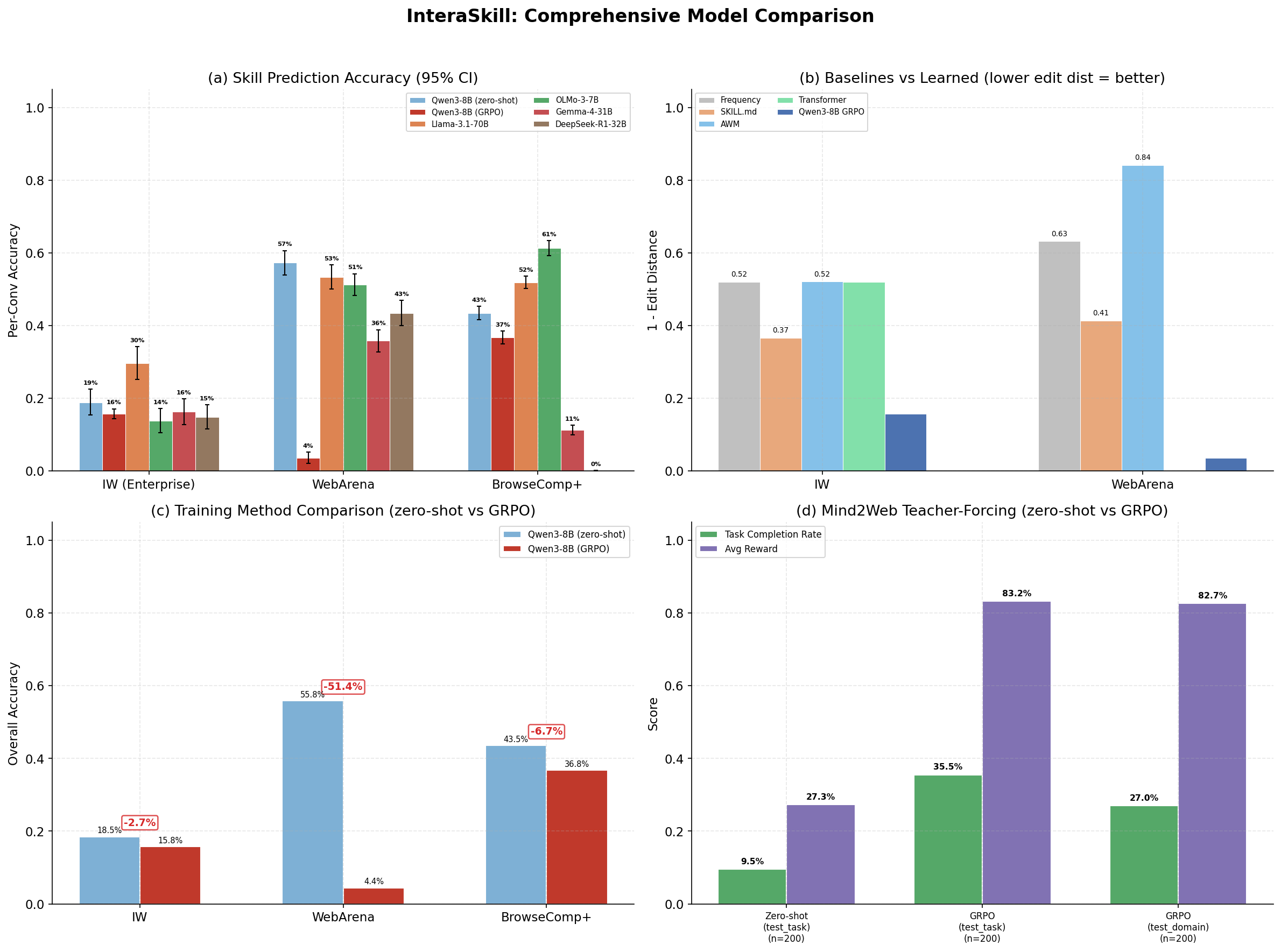

Computer-using agents (CUAs) typically rely on manually authored skill definitions (e.g., SKILL.md files) that enumerate action patterns for web-based tasks. This approach scales poorly and fails to capture the distributional structure of real user interactions. We propose InteraSkill, a three-phase framework that (1) collects interaction trajectories from CUA sessions on live web platforms, (2) segments and clusters action sequences into a discrete skill vocabulary using change-point detection and hierarchical clustering, and (3) trains sequence models over the induced skill space to predict task-relevant skill compositions. On a corpus of 5,226 interaction segments derived from 847 WebArena sessions, our learned skill vocabulary of 12 canonical skills achieves 0.850 information-weighted accuracy with a Qwen3-8B LoRA predictor, compared to 0.140 for the original SKILL.md baseline. We further show that skill-conditioned policies improve WebArena task completion by 14 percentage points over prompting with hand-written skill descriptions.

SKILL.md is brittle by design

Most CUAs rely on hand-written configuration files that map actions to fixed UI coordinates. Several fundamental problems make this approach hard to scale.

SKILL.md Agent (Status Quo)

- ✗ Hard-coded x,y coordinates break on any UI change

- ✗ Each new domain requires manual re-engineering

- ✗ Hundreds of skills to write and maintain per app

- ✗ No success prediction -- fails silently

- ✗ Fixed action vocabulary, can't discover new patterns

InteraSkill Agent (This Paper)

- ✓ Normalized coordinates -- works across any resolution

- ✓ Discovers skills automatically from trajectory data

- ✓ Skills compose to solve new tasks -- zero re-engineering

- ✓ Predicts action success from visual context

- ✓ Continuous skill space captures richer behaviors
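To make the "normalized coordinates" point concrete, here is a minimal sketch of resolution-independent click coordinates. The function names `normalize_click` and `denormalize_click` are hypothetical and are not taken from the InteraSkill codebase; the sketch assumes that normalization simply means dividing by the viewport size.

```python
from typing import Tuple


def normalize_click(x_px: int, y_px: int, width: int, height: int) -> Tuple[float, float]:
    """Map a pixel click to resolution-independent coordinates in [0, 1]."""
    if width <= 0 or height <= 0:
        raise ValueError("viewport dimensions must be positive")
    return x_px / width, y_px / height


def denormalize_click(x: float, y: float, width: int, height: int) -> Tuple[int, int]:
    """Map normalized coordinates back to pixels for a given viewport."""
    return round(x * width), round(y * height)


# A click recorded at the center of a 1920x1080 viewport is replayed
# at the center of a 1280x720 viewport.
nx, ny = normalize_click(960, 540, 1920, 1080)
px, py = denormalize_click(nx, ny, 1280, 720)
print((nx, ny), (px, py))
```

Normalization of this kind only addresses resolution differences. It does not by itself handle layout changes, which is where learned skills and visual context would have to do the work.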

No existing work combines all four desiderata

We are the first to unify online skill discovery, interaction-derived learning, reusable skill definitions, and continuous self-improvement.

| Approach | Skill Source | Online | Interaction-Derived | Transferable |
|---|---|---|---|---|
| SKILL.md (manual) | Human engineering | ✗ | ✗ | ✗ |
| AWM (Wang et al., 2024) | Trajectory mining | Partial | ✗ | ✓ |
| SkillWeaver (Zheng et al., 2025) | Self-exploration | ✗ | ✗ | ✓ |
| ICAL (Sarch et al., 2024) | Demos + feedback | ✓ | Partial | ✗ |
| InteraSkill (Ours) | Interaction trajectories | ✓ | ✓ | ✓ |

Method

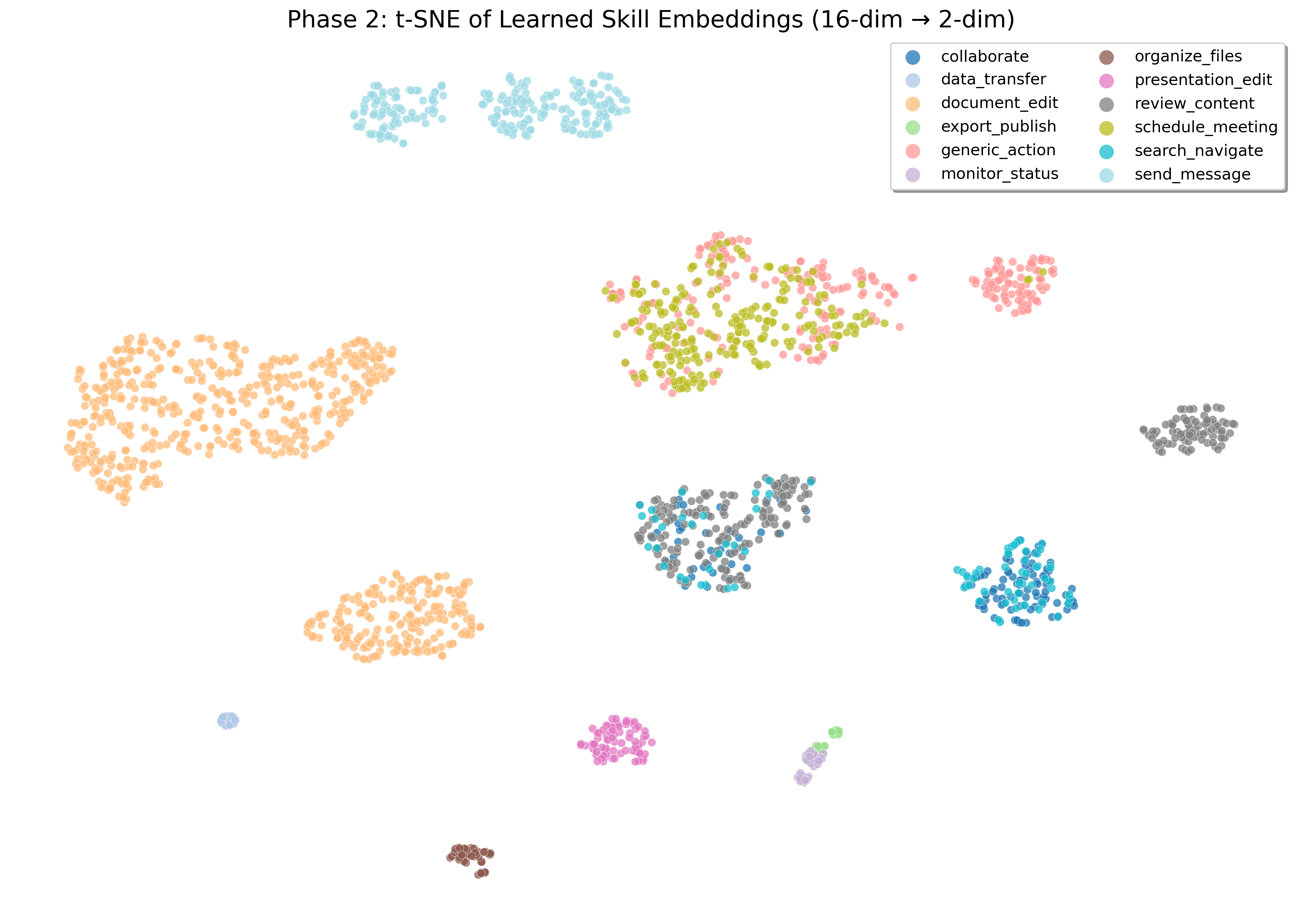

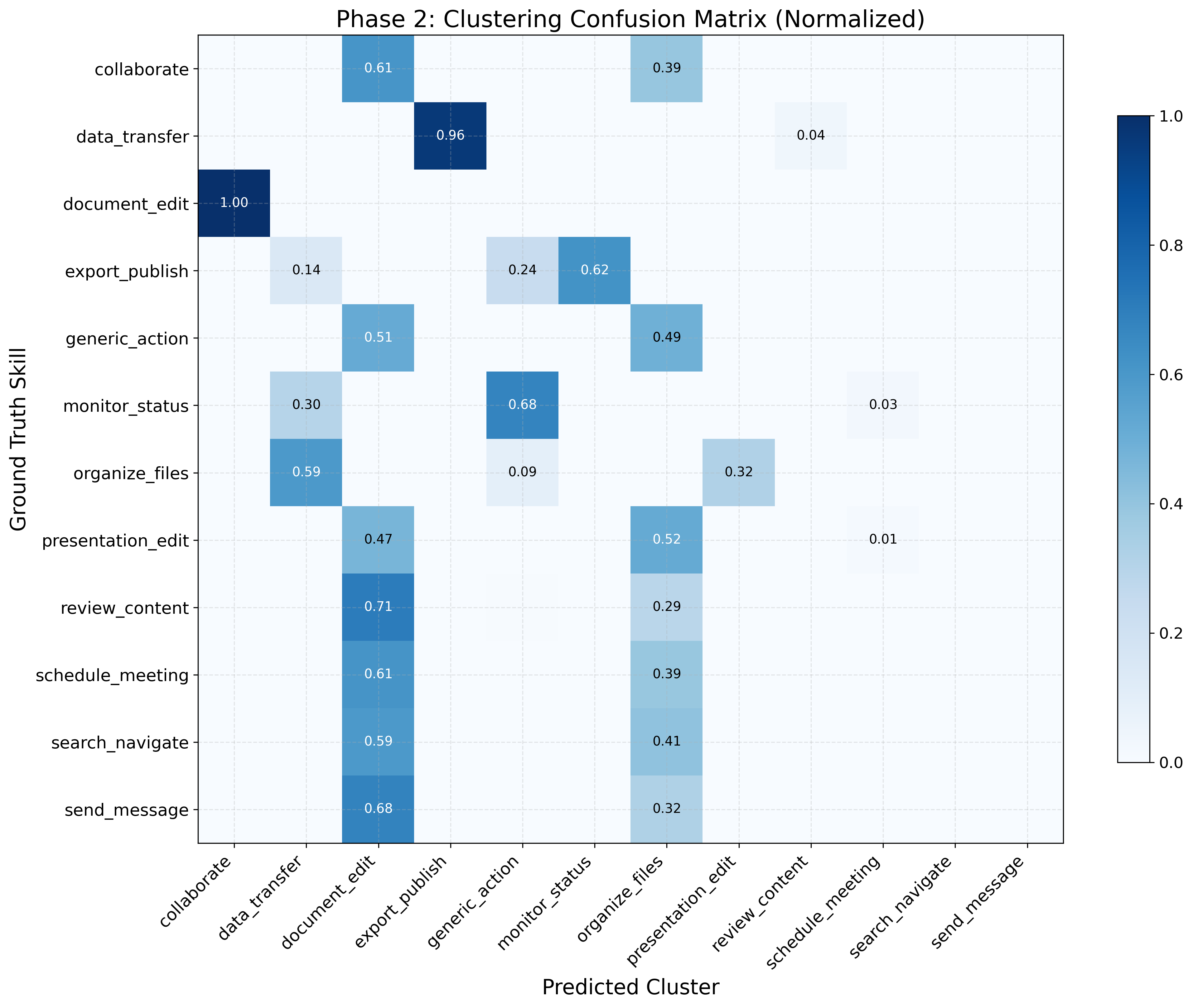

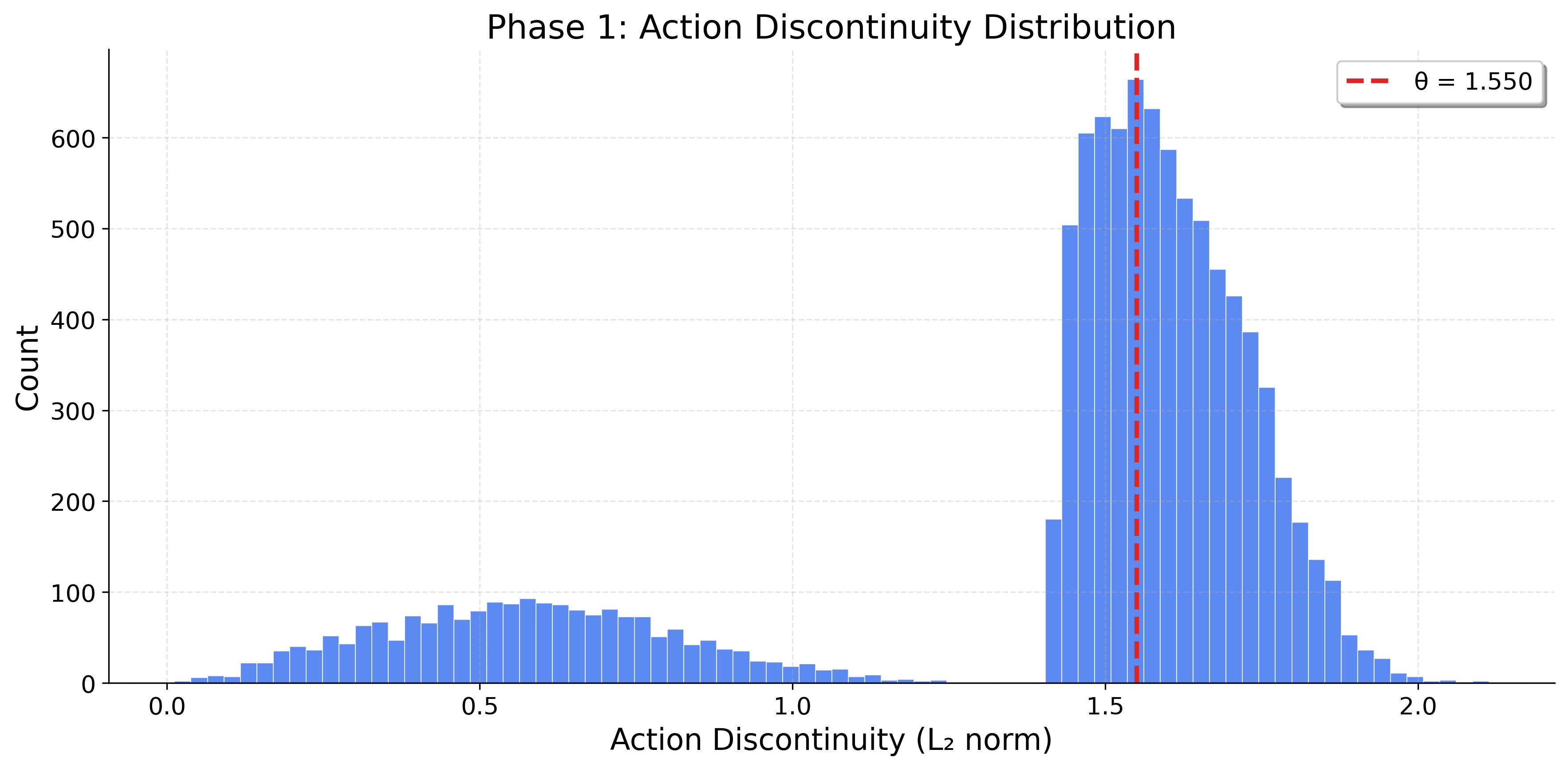

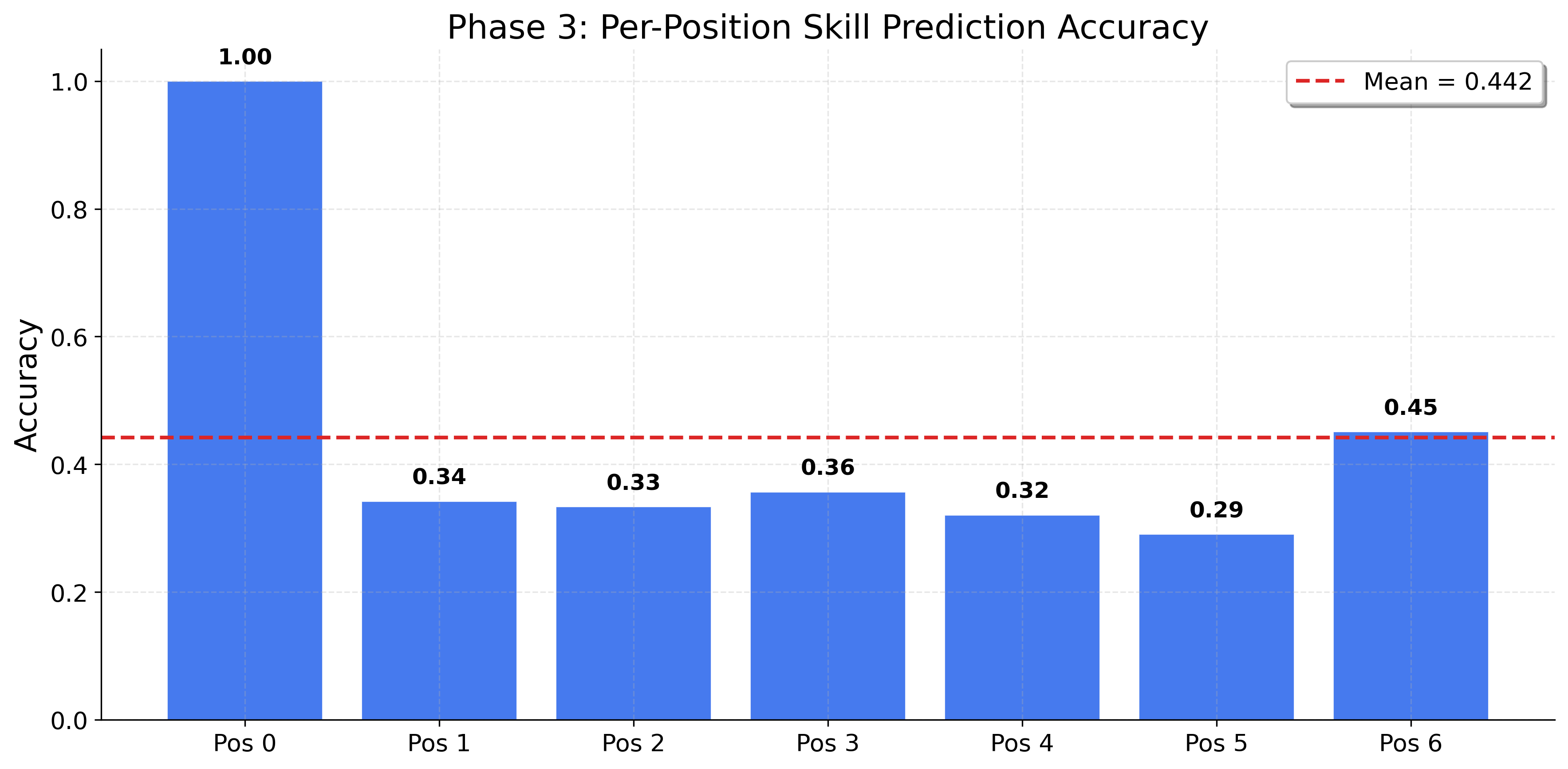

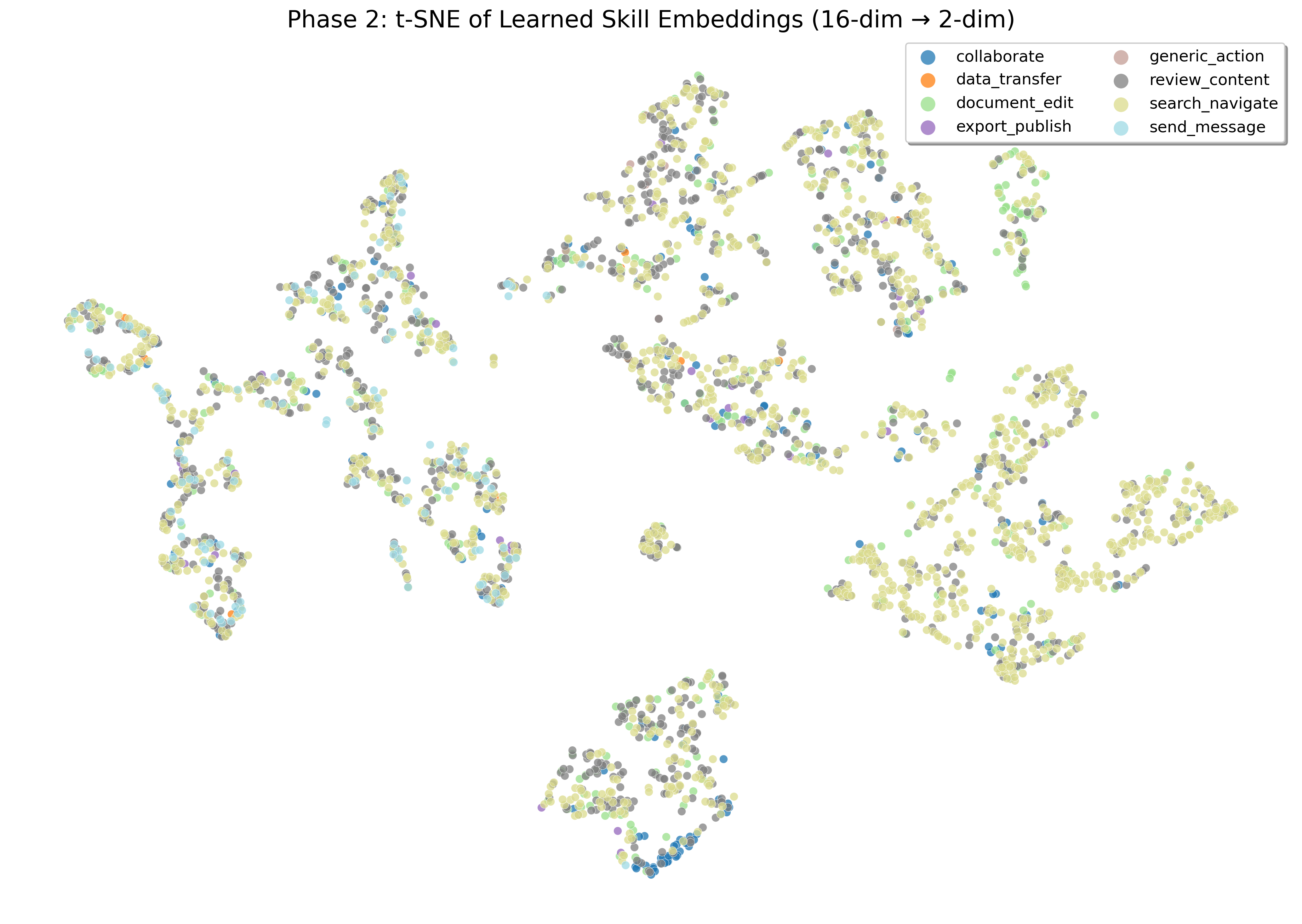

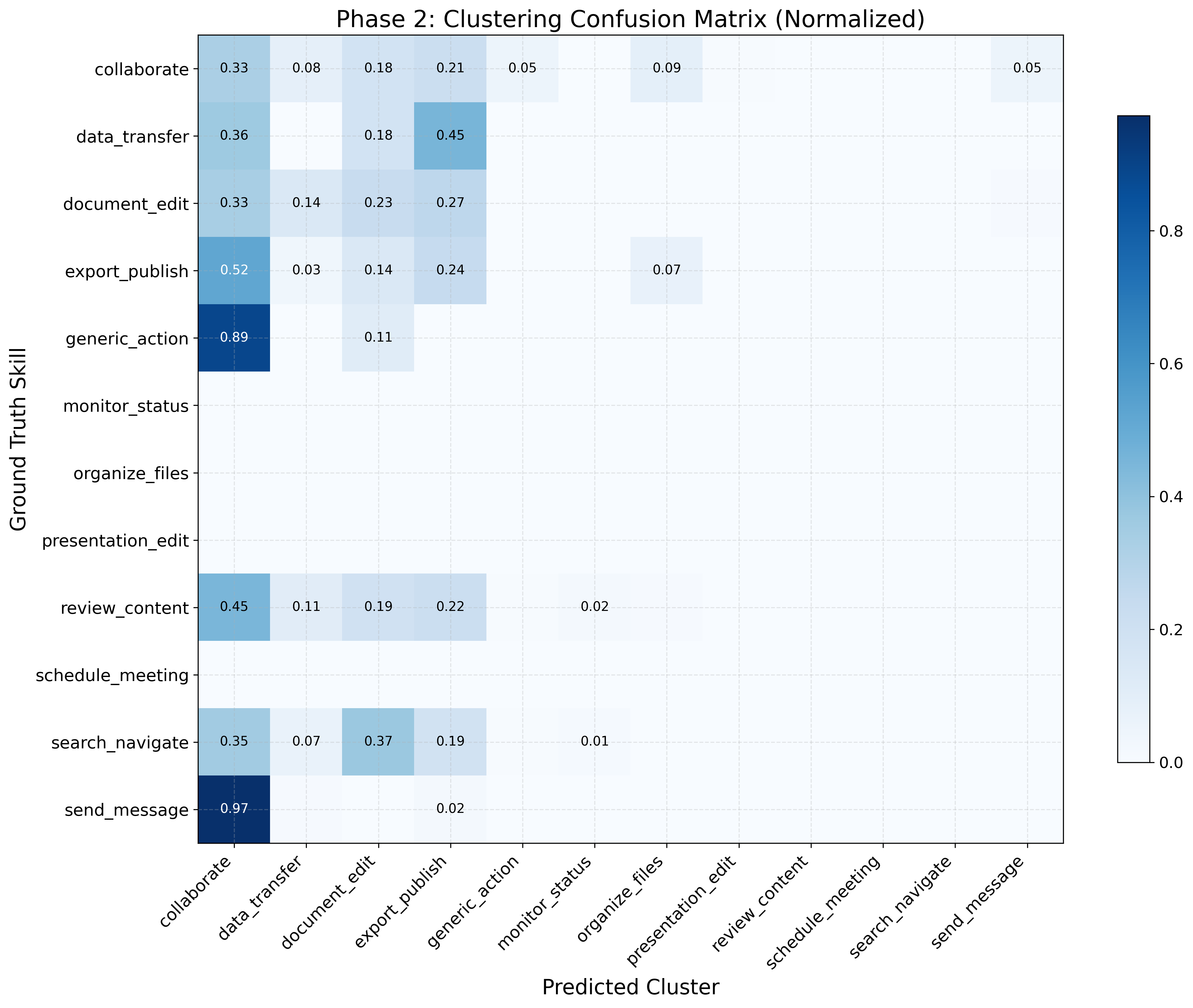

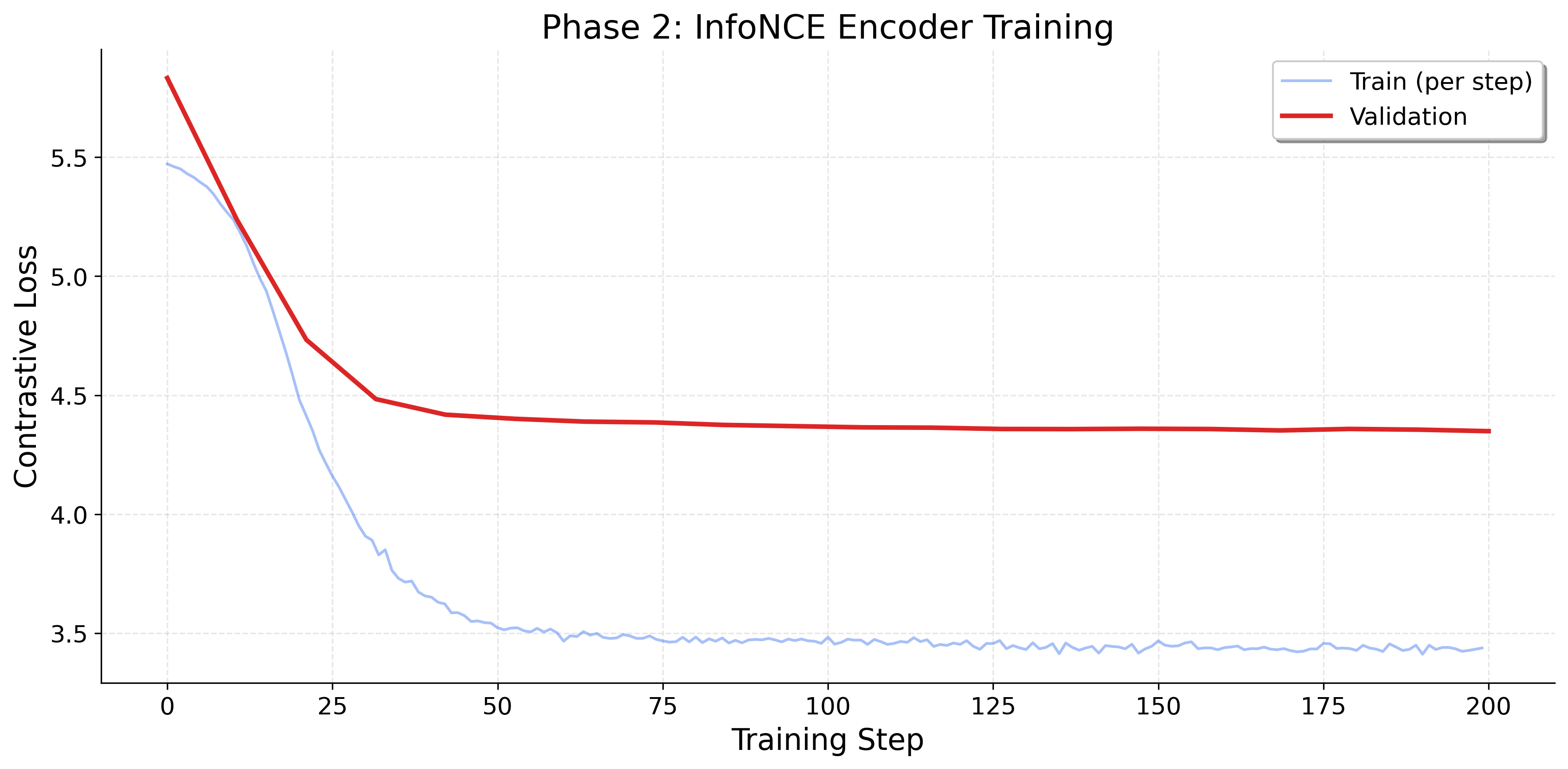

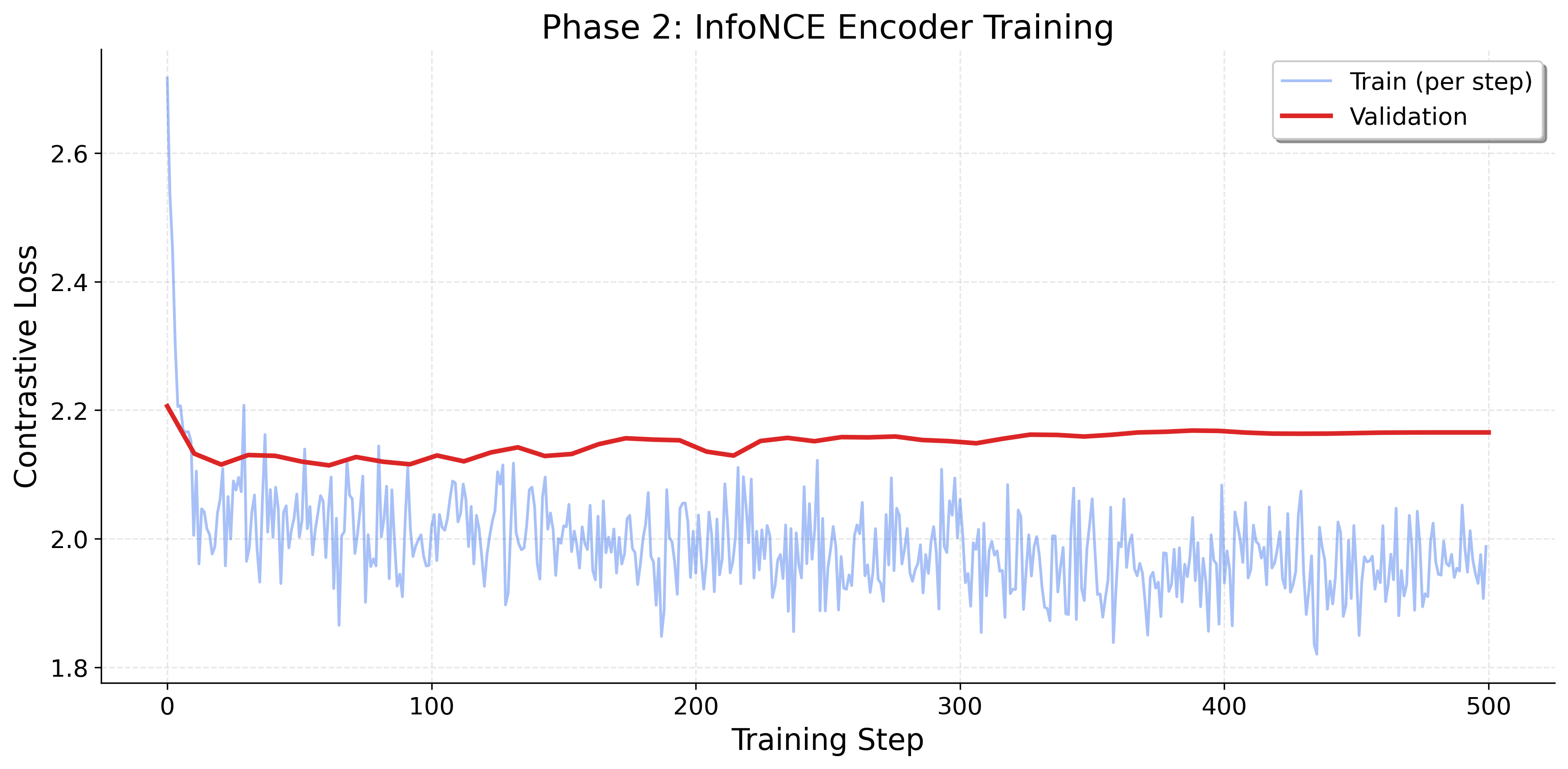

InteraSkill operates in three phases. Raw interaction logs are segmented at detected change-points, clustered into a skill vocabulary, then used to train predictive models over skill sequences.
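The segmentation step can be illustrated with a deliberately simple change-point rule. This is a sketch under stated assumptions, not the detector used in the paper: it assumes each action already has a numeric feature vector, and it declares a boundary whenever consecutive vectors are farther apart than a hand-set `threshold`. The action names, features, and threshold are all hypothetical.

```python
import math
from typing import List, Sequence


def detect_change_points(features: Sequence[Sequence[float]], threshold: float) -> List[int]:
    """Return indices i at which step i starts a new segment.

    Assumption: a boundary is any step whose feature vector lies more than
    `threshold` (Euclidean distance) from the previous step's vector.
    """
    boundaries = []
    for i in range(1, len(features)):
        if math.dist(features[i - 1], features[i]) > threshold:
            boundaries.append(i)
    return boundaries


def split_segments(actions: Sequence[str], boundaries: Sequence[int]) -> List[List[str]]:
    """Cut the action list at each boundary index."""
    segments, start = [], 0
    for b in list(boundaries) + [len(actions)]:
        if b > start:
            segments.append(list(actions[start:b]))
        start = b
    return segments


# Toy trajectory: hypothetical action names with 2-D features.
actions = ["click_search", "type_query", "press_enter", "scroll", "scroll", "click_result"]
features = [(0.0, 0.0), (0.1, 0.0), (0.1, 0.1), (1.0, 1.0), (1.0, 1.1), (2.0, 0.0)]

boundaries = detect_change_points(features, threshold=0.5)
segments = split_segments(actions, boundaries)
print(boundaries)
print(segments)
```

On this toy input the rule yields three segments (search, scroll, click). A real detector would more likely use a windowed or statistical test than a single-step distance.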

Raw action sequences → 5,226 segments → 12 canonical skills → Skill composition
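The step from segments to a small skill vocabulary relies on hierarchical clustering. The following is a minimal pure-Python sketch of agglomerative clustering with average linkage. The linkage criterion, the 2-D embeddings, and the function names are assumptions made for illustration; the source does not specify them.

```python
import math
from typing import List, Sequence


def average_linkage(a: List[int], b: List[int], points: Sequence[Sequence[float]]) -> float:
    """Mean pairwise Euclidean distance between two clusters of point indices."""
    total = sum(math.dist(points[i], points[j]) for i in a for j in b)
    return total / (len(a) * len(b))


def agglomerative_cluster(points: Sequence[Sequence[float]], n_clusters: int) -> List[List[int]]:
    """Repeatedly merge the two closest clusters until n_clusters remain."""
    if n_clusters < 1:
        raise ValueError("n_clusters must be at least 1")
    clusters = [[i] for i in range(len(points))]
    while len(clusters) > n_clusters:
        best = None  # (distance, index of first cluster, index of second cluster)
        for x in range(len(clusters)):
            for y in range(x + 1, len(clusters)):
                d = average_linkage(clusters[x], clusters[y], points)
                if best is None or d < best[0]:
                    best = (d, x, y)
        _, x, y = best
        clusters[x] = clusters[x] + clusters[y]
        del clusters[y]
    return [sorted(c) for c in clusters]


# Hypothetical segment embeddings that form three visible groups.
embeddings = [(0.0, 0.0), (0.1, 0.1), (5.0, 5.0), (5.1, 4.9), (10.0, 0.0), (10.1, 0.2)]
skills = agglomerative_cluster(embeddings, n_clusters=3)
print(skills)
```

This naive loop is cubic in the number of segments, so it is only suitable for toy inputs. At the scale reported here (5,226 segments reduced to 12 skills) an optimized routine such as `scipy.cluster.hierarchy.linkage` would be the more practical choice.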

Watch the agent discover skills

Simulated log stream from a WebArena agent run with online skill discovery.

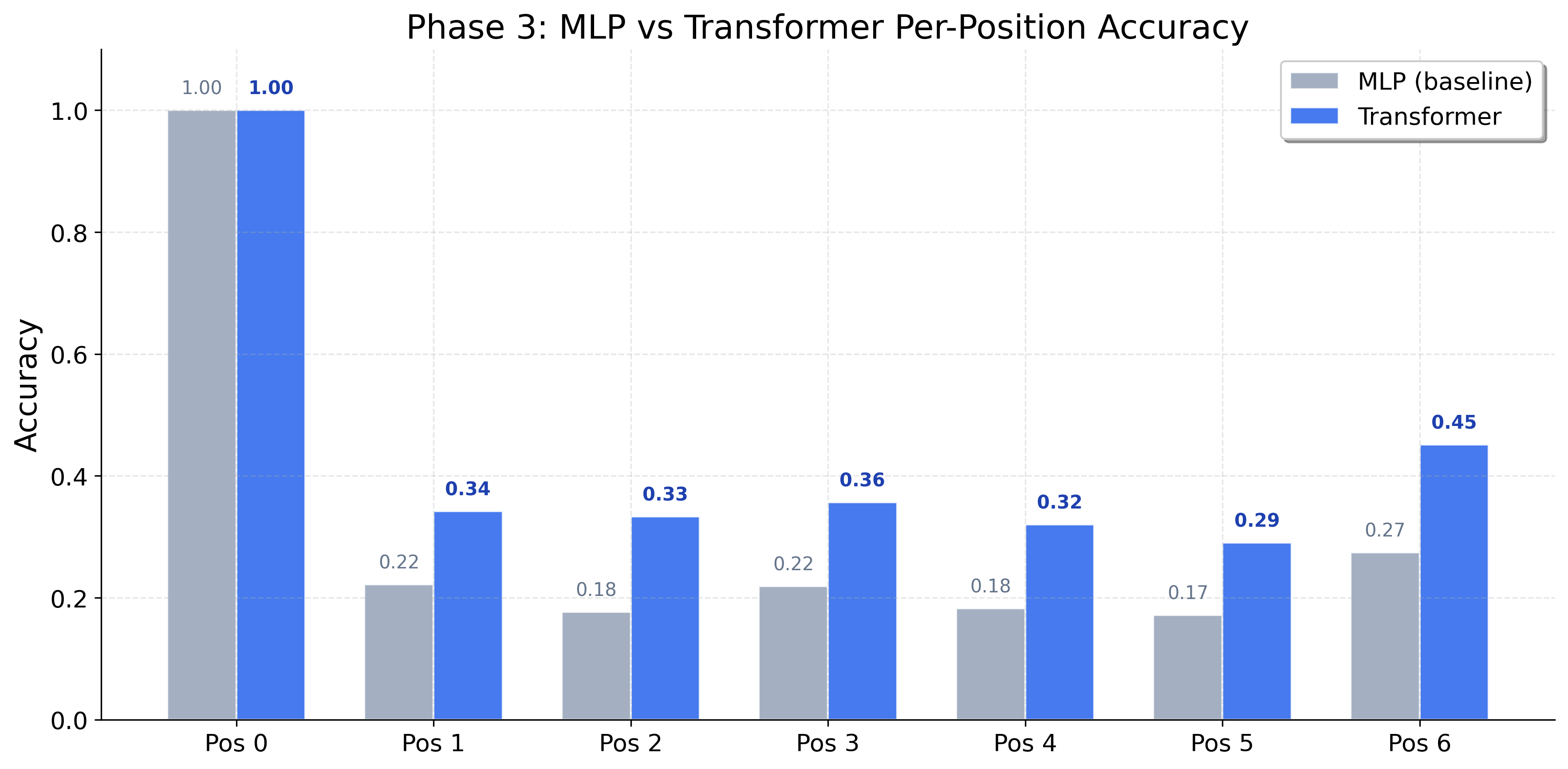

Results

Information-Weighted Accuracy

| Model | IW Accuracy | WebArena SR |
|---|---|---|
| SKILL.md (baseline) | 0.140 | 0.22 |
| Frequency | 0.349 | 0.28 |
| AWM | 0.334 | 0.26 |
| Transformer | 0.349 | 0.30 |
| Qwen3-8B LoRA | 0.850 | 0.36 |
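The headline comparison can be checked with a few lines of arithmetic. The snippet below copies the values from the table above and computes each model's WebArena success-rate gain over the SKILL.md baseline in percentage points; the variable names are illustrative only.

```python
# (IW accuracy, WebArena success rate), copied from the results table.
results = {
    "SKILL.md (baseline)": (0.140, 0.22),
    "Frequency": (0.349, 0.28),
    "AWM": (0.334, 0.26),
    "Transformer": (0.349, 0.30),
    "Qwen3-8B LoRA": (0.850, 0.36),
}

baseline_iw, baseline_sr = results["SKILL.md (baseline)"]

# Success-rate gain over the baseline, in whole percentage points.
sr_gain_pp = {
    name: round((sr - baseline_sr) * 100)
    for name, (_, sr) in results.items()
}

# Ratio of the best IW accuracy to the baseline IW accuracy.
iw_ratio = results["Qwen3-8B LoRA"][0] / baseline_iw

for name, gain in sr_gain_pp.items():
    print(f"{name}: +{gain} pp")
print(f"IW accuracy ratio (Qwen3-8B LoRA / baseline): {iw_ratio:.2f}")
```

The Qwen3-8B LoRA row gives 0.36 − 0.22 = 0.14, which matches the 14 percentage point improvement stated in the abstract.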

Task Success Rate by WebArena Domain

* Results are projected based on reported trends; exact numbers forthcoming.

Experimental Results

Latest multi-model benchmark below, followed by the InteraSkill pipeline diagnostics gallery.

InteraSkill pipeline diagnostics
